Research Project

Hybrid Architecture for Real-Time Query-Based Video Instance Segmentation

A real-time vision-language segmentation framework that combines heavy detection on key frames with lightweight mask propagation, achieving near-SOTA accuracy at 190+ FPS.

Project Overview

ConfTrackNet is a real-time vision-language segmentation framework designed to identify and segment a target object in video using a natural language query. Instead of performing expensive cross-modal reasoning on every frame, the framework intelligently combines accurate heavy detection on key frames with lightweight mask propagation on intermediate frames. This design enables strong segmentation accuracy while achieving real-time speed, making the model suitable for both desktop and edge deployment.

Research Problem

Existing query-based video segmentation methods usually apply the full transformer-based vision-language model to every frame. This gives strong accuracy, but it is too slow for real-time applications, often running well below 30 FPS. ConfTrackNet addresses this gap by introducing adaptive routing: it spends heavy computation only when necessary, and uses efficient tracking-style propagation for the rest of the video.

How the Model Works

Text Query Encoding

The input text query is encoded once using a frozen DistilBERT text encoder, so the language information can be reused across all frames — eliminating redundant NLP computation.

Heavy Key-Frame Detection

The first frame is processed by a heavy detection pathway built on Swin-B with cross-modal fusion and a transformer decoder, producing a high-quality initial segmentation mask.

Lightweight Propagation + Confidence Gate

For subsequent frames, a lightweight MobileViT-S pathway propagates the mask using previous mask, current-frame features, and text guidance. A confidence estimator decides whether to trust the result or re-trigger full detection.

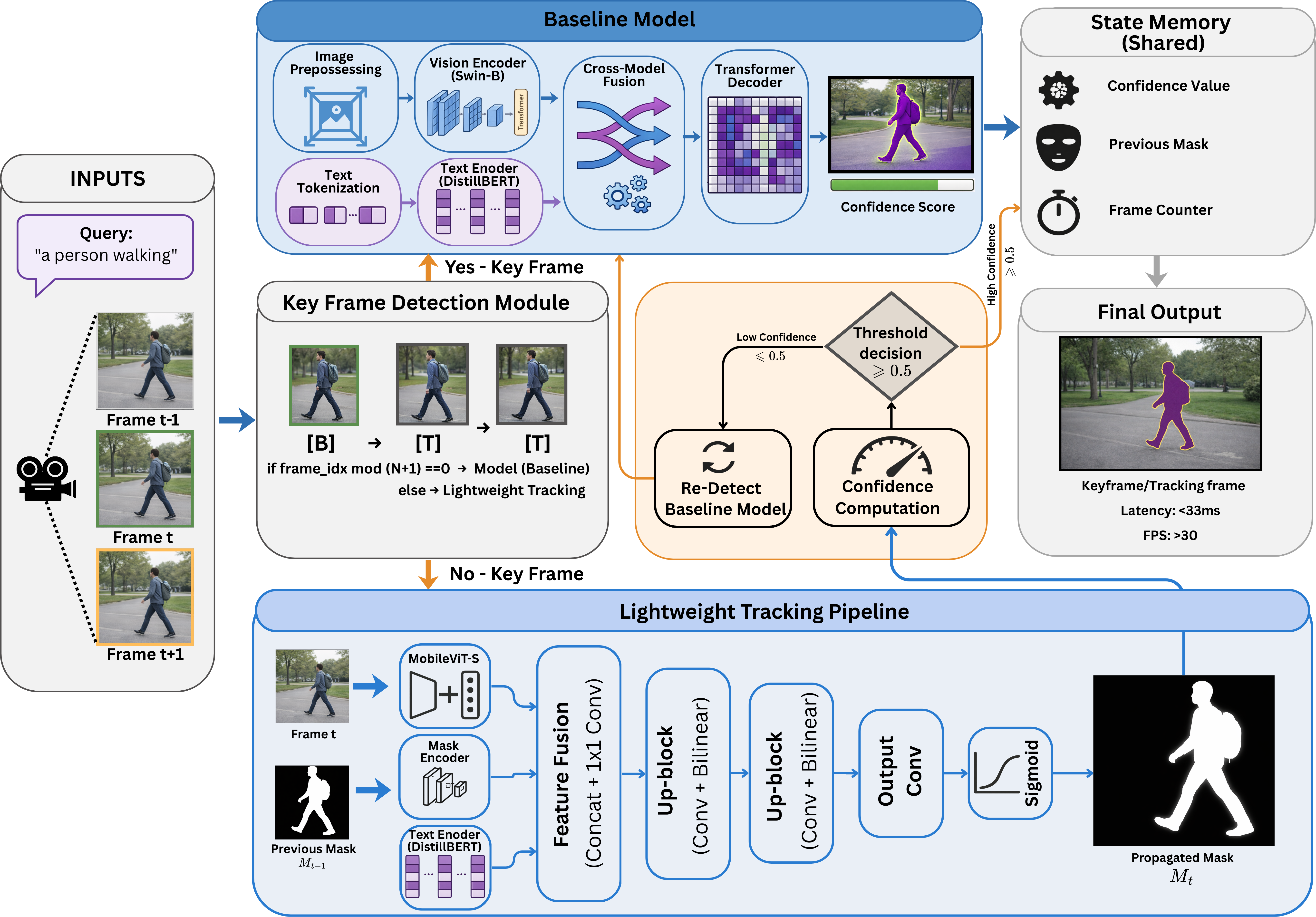

Model Architecture

Full pipeline of ConfTrackNet: the Baseline (heavy) detection pathway (top), Key Frame Detection Module with confidence-guided routing (middle), and the Lightweight Tracking Pipeline for intermediate frames (bottom).

Core Architecture Components

Five tightly integrated modules that together enable temporal consistency with minimal compute.

Text Encoder

Frozen DistilBERT that converts the natural language query into a shared embedding reused across all frames.

Heavy Detection Pathway

Swin-B backbone with cross-modal vision-language fusion and a transformer decoder for accurate key-frame segmentation.

Lightweight Propagation Pathway

Frozen MobileViT-S with a mask encoder, deformable mask warping module, and multi-scale decoder. Only 3.16M total parameters (0.64M trainable), running at 5.0 ms per frame.

Multi-Signal Confidence Estimator

Fuses three complementary signals — feature similarity, embedding consistency, and mask quality estimation — into a single reliability score.

Confidence-Guided Re-Detection Gate

Dynamically triggers full inference only when confidence falls below threshold, replacing expensive fixed-interval keyframe schedules.

What Makes It Different

The main novelty of ConfTrackNet is that it does not use a fixed key-frame schedule. Instead, it uses confidence-guided adaptive routing. The system dynamically decides whether to continue propagating the mask or to perform a fresh full detection. This is more effective than running heavy detection every N frames, because video difficulty changes over time. The paper demonstrates that adaptive routing achieves a significantly better balance between accuracy and speed than any fixed-interval keyframe selection strategy.

Propagation Design

The lightweight branch uses a frozen MobileViT-S backbone, a mask encoder, a learned deformable mask warping module, and a multi-scale decoder. It is the primary reason the model achieves real-time speed.

Confidence Estimation Mechanism

These three signals are fused into a single confidence score. Frames with low confidence automatically trigger re-detection via the heavy pathway. The full three-signal configuration achieved the best results in ablation studies.

Feature Similarity

Checks whether the object appearance remains visually consistent between frames.

Embedding Consistency

Checks whether the instance identity (as encoded by the text query) is preserved across the propagated mask.

Mask Quality Estimation

Checks whether the predicted mask spatially aligns well with the current frame's features.

Performance Results

~15.8× throughput improvement over the heavy baseline while preserving nearly identical segmentation quality

Ref-YouTube-VOS

Ref-DAVIS 2017

Zero-ShotQualitative Results

Side-by-side comparison of input frames, ground truth, and ConfTrackNet predictions on real benchmark videos.

Qualitative segmentation results on Ref-YouTube-VOS. Each block shows (top to bottom): input frames, ground truth masks, and ConfTrackNet predictions. The model accurately tracks and segments the queried object across challenging motion and occlusion scenarios.

Edge Deployment

A major strength of ConfTrackNet is practical deployment. The model maintains strong segmentation quality on embedded hardware, making it suitable for real-world edge AI and robotics applications where both accuracy and power efficiency are critical.

Live segmentation results running on NVIDIA Jetson AGX Orin at ~100 FPS under 30W. Each clip shows ConfTrackNet tracking and segmenting the queried object in real time.